SICK launches Monitoring Box for cloud-based sensor monitoring

SICK released its Monitoring Box software that enables access to sensor data for cloud-based monitoring. | Source: SICK

SICK has launched Monitoring Box, a product that enables access to sensor data for cloud-based condition monitoring. It visualizes status data from SICK sensors, offering customers added value from previously unused sensor data. The Monitoring Box displays internal device parameters to help diagnose and monitor fault conditions. It consists of a browser application, server-side data management, an IoT Gateway, and predefined sensor apps for simple connection of SICK sensors.

Condition monitoring is the visualization and interpretation of vital parameters of machinery and sensors in real time. This provides a few key benefits, including faster reaction times, reduced downtime, and lower costs for unplanned service interventions.

The Monitoring Box includes a Smart Service Gateway from SICK, which handles short-term storage, pre-processing of data, and secure data transmission. A cloud service is also provided for data security and long-term data backup. Lastly, Monitoring Apps provide application-specific support for sensors and machinery. These are plug-and-play apps that are available as a subscription.

The Monitoring Box dashboard gives an overview of the status, name, and location of connected devices, along with job recommendations. Live status data is shown in real time, including device information and status, operating mode, and other data points that help to detect errors and malfunctions.

In addition, historical data is available to understand past issues and better anticipate future ones. Issues and alerts are also recorded in a dedicated log. This log provides an overview of past alerts and helps detect and analyze irregularities within the device or process. The types of alerts can be customized based on the needs of the user.

In summary, the Monitoring Box provides:

- Cloud-based condition monitoring platform – connect sensors and other data sources to your monitoring application

- Pre-defined Monitoring Apps – start using the software without programming

- User-friendly dashboard – can be used on mobile and desktop

- Alerting via email – don't miss anomalies or warnings

- Gain insights – with Job Recommendations you receive recommendations for action

- Historical data – helps with diagnosis and interpretation, the basis for predictive maintenance

- Password protected access – via SICK ID

- Lower investment level – subscription updates & patches included

- All-in-one solution for condition monitoring – cloud, gateway, and App

The Monitoring Box is available for several SICK sensors, including safety and non-safety LiDAR, vision technology, barcode readers, RFID, and more.

The post SICK launches Monitoring Box for cloud-based sensor monitoring appeared first on The Robot Report.

Engineers create single-step, all-in-one 3D printing method to make robotic materials

A technique to teach bimanual robots stir-fry cooking

Robotic lightning bugs take flight

Emergency-response drones to save lives in the digital skies

Robots found to turn racist and sexist with flawed AI

Engineers devise a recipe for improving any autonomous robotic system

Artificial skin gives robots sense of touch and beyond

Top 10 robotic stories of May 2022

May was full of exciting developments in the robotics world. From NASA nearing the retirement of its InSight Lander, to IP acquisitions, to Festo introducing a pneumatic cobot arm, there was no shortage of things to cover this month.

Here are the Top 10 most popular robotics stories on The Robot Report in May 2022. Subscribe to The Robot Report Newsletter to stay updated on the robotics stories you need to know about.

10. 3 leading US robotics clusters create alliance

MassRobotics, Pittsburgh Robotics Network and Silicon Valley Robotics have formed the United States Alliance of Robotics Clusters (USARC). USARC supports the development, commercialization and scaling of robotics for global good by collaborating with government and industry stakeholders.

9. Mercedes rolls out Level 3 autonomous driving tech in Germany

Mercedes-Benz launched sales of its Drive Pilot system in Germany. The system is capable of operating at SAE Level 3 autonomy and can be ordered for the company’s S-Class and all-electric EQS models. Mercedes’ system was approved by the German Federal Motor Transport Authority (KBA) for Level 3 autonomy in December 2021. The approval allows Drive Pilot to take over driving in certain areas and at speeds of up to 60 km/h, around 37 mph, as long as someone is in the driver’s seat and prepared to take control of the vehicle at any time.

8. Qualcomm unveils RB6 platform and RB5 AMR reference design

Qualcomm announced the availability of its Robotics RB6 Platform and the RB5 Reference Design. The two products help to bring advanced artificial intelligence (AI) and 5G to robotics, drones and intelligent machines. The RB6 Platform is made up of both hardware and software development tools.

7. Boston Dynamics upgrades Spot with faster charging, new payloads, 5G

Boston Dynamics announced the latest upgrades for its popular quadruped robot Spot. The upgrades include faster charging times, more payloads and support for 5G connectivity. The new payloads are intended to help the quadruped operate in more areas. The Spot CORE I/O is a high-efficiency computer payload that gives Spot the ability to process data in the field.

6. Verizon shutting down Skyward drone management company

Skyward, a drone management company owned by Verizon, is shutting down. The company’s drone software platform helped customers manage their entire drone workflow, including training crews, planning missions, accessing controlled airspace and more. Skyward began in 2013 in Portland, Oregon, and was acquired by Verizon in 2017.

5. Savioke is now Relay Robotics

Relay Robotics has launched as a new corporation formed by acquiring Savioke, a developer of mobile delivery robots. The people, intellectual property, Relay product line, and all customer agreements have moved over to Relay Robotics. Relay Robotics also announced the completion of a $10M Series A financing and the appointment of veteran technology executive Michael O’Donnell as chairman and CEO.

4. Festo introduces pneumatic cobot arm

Festo announced its pneumatic collaborative robot (cobot) arm at the Festo TechTalk 2022. The company plans to make the cobot commercially available in 2023. The cobot uses six pneumatic direct drives, instead of the typical electric motors and mechanical transmission, to move. Each of the six drives consists of a circular chamber with a moveable partition. Differences in air pressure on either side of the partition wall in the chamber cause it to shift, which then moves the joint.

3. DeepMind’s open-source version of MuJoCo available on GitHub

DeepMind, an AI research lab and subsidiary of Alphabet, acquired the MuJoCo physics engine for robotics research and development in October 2021. The plan was to open-source the simulator and maintain it as a free, open-source, community-driven project. According to DeepMind, the open sourcing is now complete, and the entire codebase is on GitHub.

2. John Deere acquires camera-based perception tech from Light

John Deere has made another acquisition related to the development of autonomous tractors. John Deere has acquired numerous patents and other intellectual property from Light, which specializes in depth sensing and camera-based perception for autonomous vehicles. John Deere also hired a team of employees from Light. Financial terms of the deal are unknown.

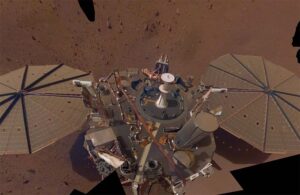

1. NASA’s InSight Lander nearing end of journey on Mars

NASA’s InSight Lander touched down on the surface of Mars on November 26, 2018, after a six-month journey from Earth. NASA’s primary goal for the lander was to glean a better understanding of how terrestrial planets, like Earth and Mars, formed and evolved. Over three years later, InSight has achieved all of its primary science goals.

The post Top 10 robotic stories of May 2022 appeared first on The Robot Report.